DIME EVENTS

We look forward to collaborating with the DiMe community at in-person and virtual events, where we exchange ideas and foster innovation to ignite change across the digital health ecosystem.

Sign up for our monthly newsletter today to hear about the latest DiMe event updates and other important news!

Advancing a core measure set for common mental health disorders for clinical research and care

Virtual | 11 a.m. ET

June 4, 2026

US Pharma and Biotech Summit

New York, NY (USA)

May 14, 2026

Enjoy a 20% discount with code: DIME

The GLP-1 revolution: Measuring what matters beyond weight loss

Virtual | 11 a.m. ET

April 29, 2026

AI x Next Generation Clinical Trials

Boston, MA (USA)

April 22 – 23, 2026

Take 10% off with code: AING26DIME

ViVE 2026

Los Angeles, CA (USA)

Feb 22 – 25, 2026

Enjoy a $250 discount with code: V26_DIME250

DiMe Webinar: Transforming clinical trials with sensor-based digital health technologies (sDHT)

Virtual | 11 a.m. ET

February 4, 2026

DiMe Launch: A new era for pediatric rare diseases: Adopting evidence-based digital measures to improve patient outcomes

Virtual | 11 a.m. ET

Jan 29, 2026

HLTH Masterclass: Designing Evidence that Pays: Aligning Value Between Innovators and Payers

Virtual | 11 a.m. ET

Jan 28, 2026

SCOPE Summit 2026

Orlando, FL (USA)

Feb 2 – 6, 2026

Enjoy a $200 discount with code: PO200

Biotech Showcase

San Francisco, CA (USA)

Jan 12 – 14, 2026

Enjoy a $200 VIP discount with code: supDIME2025

Healthcare 2030: Building Massively Better Healthcare

Virtual | 11 a.m. ET

December 2, 2025

Building the future of hospital-at-home: Strategies for sustainable, scalable care

Virtual

June 4, 2025

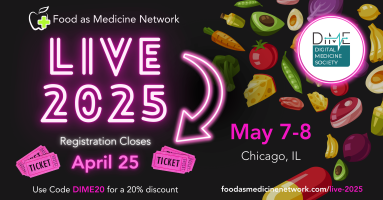

Food as Medicine Network: LIVE 2025

Chicago, IL

May 7 – May 8, 2025

20% Discount code: DIME20

Digital Health Nexus Conference

San Francisco, CA (USA)

May 7 – 8, 2025

DIME-20 for 20% off

Unlocking impact through investment: Building the business case for digital endpoints

Virtual

March 26, 2025

Integrated Evidence Plans: Streamlining evidence for commercial success to drive broad acceptance of Digital Health Technologies

Virtual

March 18, 2025

Breaking Barriers with Digital Health Technologies: Advancing CRS Risk Prediction

Virtual

February 13, 2025

DiMe Webinar: Showcasing biotech success stories: digital endpoints in clinical trials

Virtual

January 28, 2025

Biotech Showcase

San Francisco, CA (USA)

January 13 – 15, 2025

$200 off discount code: DIME200

Insights into New Quality Benchmark for MedTech Products: An Independently Awarded Seal of Evidence, Usability, Privacy, Security & Equity

Virtual

December 12, 2024 | 12 p.m. ET

Accelerating the Path to Approval: Validating Novel Digital Clinical Measures

Virtual

December 10, 2024 | 11 a.m. ET

Scaling Digital Health Globally: Navigating National Pathways for Patient Access

Virtual

November 19, 2024 | 10 a.m. ET

Transforming Alzheimer’s Disease and Related Dementia Research: New Core Digital Clinical Measures

Virtual

November 14, 2024 | 11 a.m. ET

Introducing the DiMe Seal: An Information Session for Developers

Virtual

Thur., Oct 31, 2024 | 11:00am ET

DiMe Journal Club: Regulatory Pathways for Qualification and Acceptance of Digital Health Technology-Derived Clinical Trial Endpoints: Considerations for Sponsors

Virtual

October 30, 2024 | 11 a.m. ET

Introducing the DiMe Seal: An Information Session for Providers, Health systems, and Payors

Virtual

Tues., Oct 29, 2024 | 11:00 am – 12:00 pm ET

World Drug Safety Congress Americas

Boston, MA (USA)

October 29-30, 2024

50% off discount code: DIME50

DiMe Journal Club: Net financial benefits of digital endpoints publication in Clinical and Translational Science

Virtual

October 16, 2024 | 11 a.m. ET

DiMe Journal Club: Wearable Digital Health Technology

Virtual

October 3, 2024 | 11 a.m. ET

ConV2X Telehealth Symposium entitled “Blending Health Technology to Meet Patient Needs”

New York City, NY, USA

October 1-2, 2024

Advancing Equity and Reducing Financial Toxicity in Cancer Care: Resources to Quantify and Maximize the Value of Digital Patient Navigation

Virtual

August 27, 2024 | 11 am ET

Public Workshop | Using Patient Generated Health Data in Medical Device Development: Case Examples of Implementation Throughout the Total Product Lifecycle

Virtual

June 26-27, 2024

Digital Measurement of Nocturnal Scratch: New Developments: Processes, Validation, and Adoption

Virtual

June 18 | 11 a.m. ET

Digital Measurement of Nocturnal Scratch: New Developments | Updates from R&D on Algorithms and Tools

Virtual

June 11 | 11 a.m. ET

Digital Measurement of Nocturnal Scratch: New Developments: Recent Regulatory Feedback

Virtual

June 4 | 11 a.m. ET

DiMe Webinar: With tightening digital health funding and escalating expectations, how can innovators efficiently construct evidence for their products?

Virtual

May 21, 2024 | 11 a.m. ET

DiMe Webinar Sleep Launch: Advancing Sleep Research: New Core Digital Measures & Resources

Virtual

April 24 | 11am ET

DiMe Webinar: Clinical trial efficiency using data and digital health tech

Virtual

April 18 | 11 am ET

Data-Driven, Hybrid, and Fully Decentralized Clinical Trials Event

Philadelphia, PA (USA)

April 16 – 17, 2024

15% off with code: P24DCTDIME

Journal Club: Defining the Dimensions of Diversity to Promote Inclusion in the Digital Era of Healthcare

Virtual

March 27, 2024

V3+: An extension to the V3 framework to ensure user-centricity and scalability of sensor-based digital health technologies

Virtual

Feb 27, 2024 | 11am ET

DiMe Journal Club: The promise of artificial intelligence (AI) and machine learning (ML) for improving clinical outcomes

Virtual

February 15, 2024 | 11 am ET

SCOPE Summit 2024

Orlando, FL (USA)

Feb 11-14, 2024

For a discount use DIME ($200 off) when registering.

DiMe Journal Club: Incorporating digitally derived endpoints within clinical development programs by leveraging prior work

Virtual

January 18, 2024 | 11 am ET

J.P. Morgan 42nd Annual Healthcare Conference

San Francisco, California (USA)

Jan 8-11, 2024